BLUFF: Benchmarking in Low-resoUrce Languages for detecting Falsehoods and Fake news

BLUFF Framework Overview

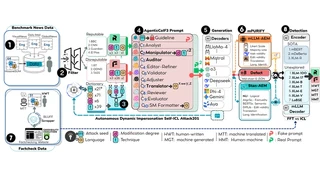

BLUFF Framework OverviewBLUFF is the largest multilingual fake news detection benchmark to date, spanning 79 languages (20 high-resource “big-head” + 59 low-resource “long-tail”) with over 202,000 samples. The benchmark combines human-written fact-checked content from 130 IFCN-certified organizations with LLM-generated content from 19 diverse models.

Key contributions include:

- AXL-CoI (Adversarial Cross-Lingual Agentic Chain-of-Interactions): A multi-agentic framework using 10 fake chains and 8 real chains for controlled multilingual content generation

- mPURIFY: A 4-stage quality filtering pipeline with 32 features across 5 dimensions, ensuring dataset integrity through asymmetric evaluation thresholds

- Bidirectional translation: English↔X coverage across 70+ languages with 4 prompt variants

- Comprehensive evaluation: State-of-the-art detectors suffer up to 25.3% Macro-F1 degradation on low-resource versus high-resource languages

Resources:

I am a PhD candidate in Informatics in the College of IST at Penn State University, where I conduct research at the PIKE Research Lab under the guidance of Dr. Dongwon Lee. I specialize in AI/ML research focused on Information Integrity, Safe and Ethical AI, including combating harmful content across multiple languages and modalities. My research spans low-resource multilingual NLP, generative AI, and adversarial machine learning, with work extending across 79 languages. I have published 12 papers with 260+ citations in premier venues including ACL, EMNLP, IEEE, and NAACL.

My doctoral research focuses on bridging the digital language divide through transfer learning, classification (NLU), generation (NLG), adversarial attacks, and developing end-to-end AI pipelines using RAG and Agentic AI workflows for combating multilingual threats. Drawing from my Grenadian background and knowledge of local Creole languages, I bring a global perspective to AI challenges, working to democratize state-of-the-art AI capabilities for underserved linguistic communities worldwide. My mission is to develop robust multilingual multimodal systems and mitigate evolving security vulnerabilities while enhancing access to human language technology through cutting-edge solutions.

As an NSF LinDiv Fellow, I conduct transdisciplinary research advancing human-AI language interaction for social good. I actively mentor 5+ research interns and teach Applied Generative AI courses. Through industry experience at Lawrence Livermore National Lab, Interaction LLC, and Coalfire, I bridge academic research with practical applications in combating evolving security threats and enhancing global AI accessibility. I see multilingual advances and interdisciplinary collaboration as a competitive advantage, not a communication challenge. Beyond research, I stay active through dance, fitness, martial arts, and community service.